Web scraping has become a core tool for data-driven decision-making: it lets businesses, researchers, and developers extract information from websites at scale. In practice, its efficiency is often limited by technical complexity and by the anti-scraping measures that websites deploy.

This is where AI technologies like ChatGPT, combined with tools such as Infatica's residential proxies, become useful. This guide looks at how ChatGPT can be used to make web scraping more efficient, and covers practical strategies and best practices.

Understanding Web Scraping and Its Challenges

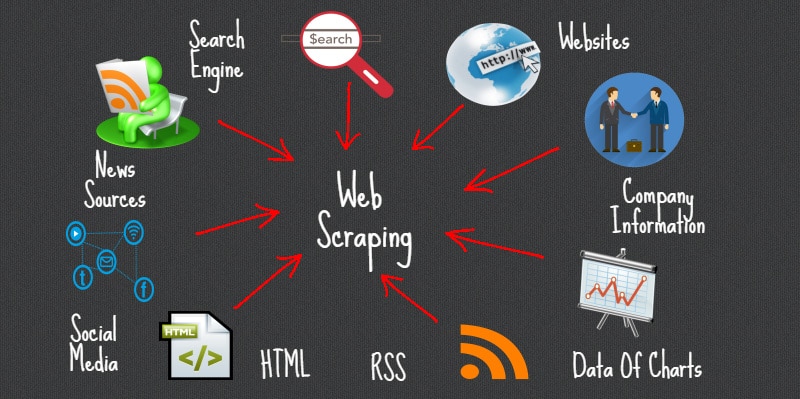

Web scraping is the process of automatically extracting data from websites. This technique is widely used for various purposes, including market research, price comparison, lead generation, and sentiment analysis.

The main challenges in web scraping are complex website structures and dynamically generated content. In addition, many websites employ anti-scraping measures such as CAPTCHAs, IP blocking, and rate limiting to prevent automated data extraction.

To counter these measures, web scrapers often use proxies. Infatica's residential proxies are particularly effective as they provide genuine residential IP addresses, reducing the likelihood of detection and blocking. These proxies enable scrapers to mimic the behavior of a regular user, thereby bypassing restrictions and accessing content more reliably.
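As a rough illustration of routing requests through a proxy, the sketch below uses only Python's standard library. The gateway host, port, username, and password are placeholders chosen for this example, not Infatica's actual endpoint format; the real values would come from your proxy provider. The helper name `build_proxy_url` is also just an illustrative choice.

```python
import urllib.request
from urllib.parse import quote


def build_proxy_url(host: str, port: int, user: str, password: str) -> str:
    """Build an authenticated proxy URL (hypothetical helper for this sketch)."""
    # Percent-encode credentials so characters like '@' or ':' do not break the URL.
    return f"http://{quote(user, safe='')}:{quote(password, safe='')}@{host}:{port}"


def build_proxy_opener(proxy_url: str) -> urllib.request.OpenerDirector:
    """Return an opener that sends both HTTP and HTTPS traffic through one proxy."""
    handler = urllib.request.ProxyHandler({"http": proxy_url, "https": proxy_url})
    return urllib.request.build_opener(handler)


# Placeholder values (assumptions): replace with the details from your provider.
proxy_url = build_proxy_url("proxy.example.com", 8080, "USERNAME", "PASSWORD")
opener = build_proxy_opener(proxy_url)
opener.addheaders = [("User-Agent", "example-scraper/0.1")]

# Uncomment to perform a real request through the proxy:
# with opener.open("https://example.com", timeout=10) as response:
#     html = response.read().decode("utf-8", errors="replace")
```

Building the opener does not open any connection; traffic only flows once `opener.open(...)` is called.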

The Role of ChatGPT in Enhancing Web Scraping

ChatGPT, a conversational assistant built on OpenAI's GPT (Generative Pre-trained Transformer) models, offers significant advantages in web scraping. While it is not a scraping tool per se, ChatGPT can be integrated into the web scraping process to enhance its efficiency and effectiveness in several ways:

- Automated Query Generation: ChatGPT can generate contextually relevant search queries or form inputs, which can be used to automate and diversify the data extraction process.

- Data Parsing and Structuring: After extracting raw data, ChatGPT can assist in parsing and structuring it into a more usable format, such as categorizing information or extracting specific details from a bulk text.

- Handling CAPTCHAs and Interactive Elements: For websites with simple CAPTCHAs or interactive elements, ChatGPT can potentially generate responses, although this application is in a legal and ethical gray area and should be approached with caution.

- Enhancing the Quality of Scraped Data: ChatGPT can be used to refine and improve the quality of the scraped data by checking for inconsistencies, filling in missing information, or even correcting errors.

- Natural Language Processing (NLP) Applications: The model's advanced NLP capabilities can be leveraged to analyze and interpret the text data extracted from websites, providing deeper insights and understanding.
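The data parsing and structuring idea from the list above could look something like the following sketch. It makes several assumptions: the field names in `FIELDS` are invented for illustration, and the model call is passed in as a plain function (`ask_model`) rather than tied to any particular client library. The `fake_model` stand-in simply returns a canned reply so the example runs offline.

```python
import json
from typing import Callable

# Hypothetical fields for this example; a real project would define its own.
FIELDS = ("name", "price", "currency")


def build_extraction_prompt(raw_text: str) -> str:
    """Ask for a single JSON object with a fixed set of keys."""
    return (
        "Extract the following fields from the text and reply with a single "
        f"JSON object using exactly these keys: {', '.join(FIELDS)}. "
        "Use null for anything that is not stated.\n\n"
        f"Text:\n{raw_text}"
    )


def parse_model_reply(reply: str) -> dict:
    """Parse the reply defensively; models sometimes wrap JSON in extra text."""
    start, end = reply.find("{"), reply.rfind("}")
    if start == -1 or end <= start:
        raise ValueError("no JSON object found in model reply")
    data = json.loads(reply[start : end + 1])
    # Keep only the expected keys and fill any gaps with None.
    return {key: data.get(key) for key in FIELDS}


def structure_text(raw_text: str, ask_model: Callable[[str], str]) -> dict:
    """Send scraped text to a model and return a validated record."""
    return parse_model_reply(ask_model(build_extraction_prompt(raw_text)))


def fake_model(prompt: str) -> str:
    """Offline stand-in for a real model call (assumption for this sketch)."""
    return 'Here you go: {"name": "Widget", "price": 9.99, "currency": "USD", "extra": 1}'


record = structure_text("Widget, now only $9.99", fake_model)
```

Validating the reply against a fixed key set keeps downstream code from depending on whatever extra keys or commentary the model happens to add.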

Integrating ChatGPT with Web Scraping Tools

Integrating ChatGPT into your web scraping toolkit involves a combination of programming and AI expertise. Here’s how you can go about it:

- Script Enhancement: Incorporate ChatGPT into existing Python scraping scripts (using libraries like BeautifulSoup or Scrapy) to handle complex tasks like data parsing and query generation.

- Building Intelligent Scraping Bots: Develop sophisticated scraping bots powered by ChatGPT that can adapt their scraping strategy based on the content type and structure of the target website.

- Data Post-Processing: Utilize ChatGPT in the post-processing stage to analyze, summarize, and transform the scraped data into actionable insights.
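One way to picture the script-enhancement step is a small pre-processing stage that turns a fetched page into text chunks sized for a model prompt. The sketch below uses the standard library's `html.parser` to stay dependency-free; in a BeautifulSoup-based script, `get_text()` would play a similar role. The class and function names, the sample HTML, and the 2000-character limit are all assumptions made for illustration.

```python
from html.parser import HTMLParser
from typing import List


class VisibleTextExtractor(HTMLParser):
    """Collect text nodes, skipping the contents of <script> and <style>."""

    SKIPPED = {"script", "style"}

    def __init__(self) -> None:
        super().__init__()
        self._skip_depth = 0
        self.parts = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        text = data.strip()
        if text and self._skip_depth == 0:
            self.parts.append(text)


def html_to_text(html: str) -> str:
    """Return the visible text of a page, one text node per line."""
    parser = VisibleTextExtractor()
    parser.feed(html)
    parser.close()
    return "\n".join(parser.parts)


def chunk_text(text: str, max_chars: int = 2000) -> List[str]:
    """Split on line boundaries into chunks of at most max_chars where possible."""
    chunks, current = [], ""
    for line in text.splitlines():
        if current and len(current) + len(line) + 1 > max_chars:
            chunks.append(current)
            current = line
        else:
            current = f"{current}\n{line}" if current else line
    if current:
        chunks.append(current)
    return chunks


# Sample input invented for this sketch.
sample_html = (
    "<html><head><style>p{color:red}</style></head><body>"
    "<h1>Acme Widget</h1><p>Price: $9.99</p><script>track()</script>"
    "</body></html>"
)
page_text = html_to_text(sample_html)
chunks = chunk_text(page_text, max_chars=2000)
```

Each chunk could then be sent to the model for parsing or summarization. A single line longer than `max_chars` is kept whole rather than cut mid-sentence.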

Best Practices for Efficient Web Scraping

While integrating ChatGPT can significantly enhance web scraping efficiency, adhering to best practices is crucial:

- Ethical Considerations: Always respect the privacy and terms of service of the target websites. Unauthorized scraping can lead to legal and ethical issues.

- Efficient Use of Proxies: Utilize Infatica's residential proxies effectively to mimic genuine user behavior and avoid detection.

- Rate Limiting: Implement rate limiting in your scraping scripts to prevent overloading the target website’s server.

- Regular Script Maintenance: Keep your scraping scripts and AI models updated to adapt to changes in website structures and scraping barriers.

- Data Management: Ensure efficient storage, handling, and processing of the scraped data, considering aspects like database management and data privacy.
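Two of the practices above, respecting site rules and rate limiting, can be sketched with the standard library alone. The robots.txt content, user-agent string, URLs, and one-second interval below are illustrative assumptions; a real scraper would download the site's actual robots.txt and choose an interval suited to the target.

```python
import time
from urllib.robotparser import RobotFileParser


class RateLimiter:
    """Enforce a minimum delay between consecutive requests."""

    def __init__(self, min_interval: float, clock=time.monotonic, sleep=time.sleep):
        self.min_interval = min_interval
        self._clock = clock
        self._sleep = sleep
        self._last_call = None

    def wait(self) -> float:
        """Sleep if the previous call was too recent; return the seconds slept."""
        delay = 0.0
        if self._last_call is not None:
            elapsed = self._clock() - self._last_call
            delay = max(0.0, self.min_interval - elapsed)
            if delay > 0:
                self._sleep(delay)
        self._last_call = self._clock()
        return delay


def load_robots_rules(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt content that has already been downloaded."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


# Example rules and URLs are made up for this sketch.
rules = load_robots_rules("User-agent: *\nDisallow: /private/\n")
limiter = RateLimiter(min_interval=1.0)

allowed = []
for url in ["https://example.com/products", "https://example.com/private/admin"]:
    if not rules.can_fetch("example-scraper", url):
        continue  # skip paths the site asks crawlers to avoid
    limiter.wait()
    allowed.append(url)  # a real script would fetch the URL here
```

The clock and sleep functions are injectable so the limiter can be tested without real delays.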

Overcoming Challenges in Web Scraping with ChatGPT

Integrating ChatGPT into web scraping processes can present challenges that need to be navigated:

- Complex Integration: The process of integrating ChatGPT with existing web scraping frameworks can be technically challenging and may require advanced programming skills.

- Resource Intensiveness: ChatGPT runs as a hosted service, so every call adds usage-based API cost and latency. At scraping volumes, this can noticeably increase operational costs.

- Staying Updated: The fields of AI and web scraping are constantly evolving, necessitating continuous learning and adaptation to new technologies and methods.

- Ethical and Legal Considerations: The use of AI in web scraping must be approached with an understanding of ethical and legal implications, especially regarding data privacy and usage.
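One possible way to soften the cost concern above is to cache model replies so that identical prompts are only sent once. The following is a minimal in-memory sketch; `CachedModel` and `fake_model` are hypothetical names, and the wrapped `ask_model` function stands in for whatever client call a project actually uses.

```python
import hashlib
from typing import Callable, Dict


class CachedModel:
    """Wrap a model-calling function and reuse replies for repeated prompts."""

    def __init__(self, ask_model: Callable[[str], str]):
        self._ask_model = ask_model
        self._cache: Dict[str, str] = {}
        self.calls = 0  # number of times the underlying model was invoked

    def __call__(self, prompt: str) -> str:
        # Hash the prompt so cache keys stay small even for long inputs.
        key = hashlib.sha256(prompt.encode("utf-8")).hexdigest()
        if key not in self._cache:
            self.calls += 1
            self._cache[key] = self._ask_model(prompt)
        return self._cache[key]


def fake_model(prompt: str) -> str:
    """Offline stand-in for a real model call (assumption for this sketch)."""
    return prompt.upper()


model = CachedModel(fake_model)
first = model("summarize: widget page")
second = model("summarize: widget page")  # served from the cache
third = model("summarize: gadget page")
```

A persistent store would be needed for the cache to survive between runs; this version only helps within a single process.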

Conclusion

Integrating ChatGPT into web scraping workflows offers a path to more efficient and more capable data extraction. By using it for query generation, data parsing, and handling anti-scraping measures, businesses and researchers can unlock new possibilities in data gathering and analysis.

However, it is imperative to navigate this integration with a strong adherence to ethical and legal standards. As AI continues to advance, its role in enhancing web scraping and data analysis will undoubtedly grow, offering exciting opportunities for innovation in the field of data-driven decision-making.